- USING KAFKA TOOL CREATE DC1 TO DC2 HOW TO
- USING KAFKA TOOL CREATE DC1 TO DC2 INSTALL
- USING KAFKA TOOL CREATE DC1 TO DC2 PASSWORD
- USING KAFKA TOOL CREATE DC1 TO DC2 OFFLINE

I am not sure how it should be set up or what I can do to fix this problem. I have read a bit about the 80/20 setup, but I am not sure what it is exactly or what I need to do.
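For reference, the 80/20 rule usually means a split-scope DHCP layout: both servers carry the same scope, but each one excludes the other's share of the pool, so DC1 leases roughly 80% of the addresses and DC2 the remaining 20%. Both servers still answer client requests, so the split on its own does not turn DC2 into a pure standby; it only ensures DC2 still has addresses to hand out if DC1 goes offline. The arithmetic for a hypothetical 192.168.1.0/24 scope (hosts .1 to .254, an assumed example rather than the actual network) can be sketched as:

```bash
#!/usr/bin/env bash
# Sketch of the 80/20 split-scope arithmetic.
# The network prefix and host range used below are hypothetical examples.

# split_scope PREFIX FIRST LAST
# Prints the part of the host range each DHCP server should lease.
split_scope() {
  local prefix=$1 first=$2 last=$3
  local total=$(( last - first + 1 ))
  # Integer arithmetic: DC1 gets 80% (rounded down), DC2 gets the rest.
  local dc1_count=$(( total * 80 / 100 ))
  local dc1_last=$(( first + dc1_count - 1 ))
  local dc2_first=$(( dc1_last + 1 ))
  local dc2_count=$(( total - dc1_count ))
  echo "DC1 (80%): ${prefix}.${first}-${prefix}.${dc1_last} (${dc1_count} addresses)"
  echo "DC2 (20%): ${prefix}.${dc2_first}-${prefix}.${last} (${dc2_count} addresses)"
  # On each server the scope covers the whole range, and the OTHER server's
  # part is entered as an exclusion range.
}

split_scope 192.168.1 1 254
```

With these sample values DC1 would lease .1 to .203 (203 addresses) and exclude .204 to .254, while DC2 would lease .204 to .254 (51 addresses) and exclude .1 to .203.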

# USING KAFKA TOOL CREATE DC1 TO DC2 OFFLINE #

Some clients obtain leases from DC1 whilst others pick them up from DC2. However, DC2 was only meant to kick in if DC1 goes offline, so the current setup is undesirable.

# USING KAFKA TOOL CREATE DC1 TO DC2 HOW TO #

I have a setup with two domain controllers: one is the PDC and the other is supposed to provide redundancy. Let's call the PDC DC1 and the redundant DC DC2. AD appears to replicate from DC1 to DC2, but I am not sure how this was set up or how to verify its configuration. Clients appear to pick and choose which DC they obtain a DHCP lease from. After some investigation, it appears that the second DC is acting more like a load-balancing server than the failover server it was originally supposed to be.

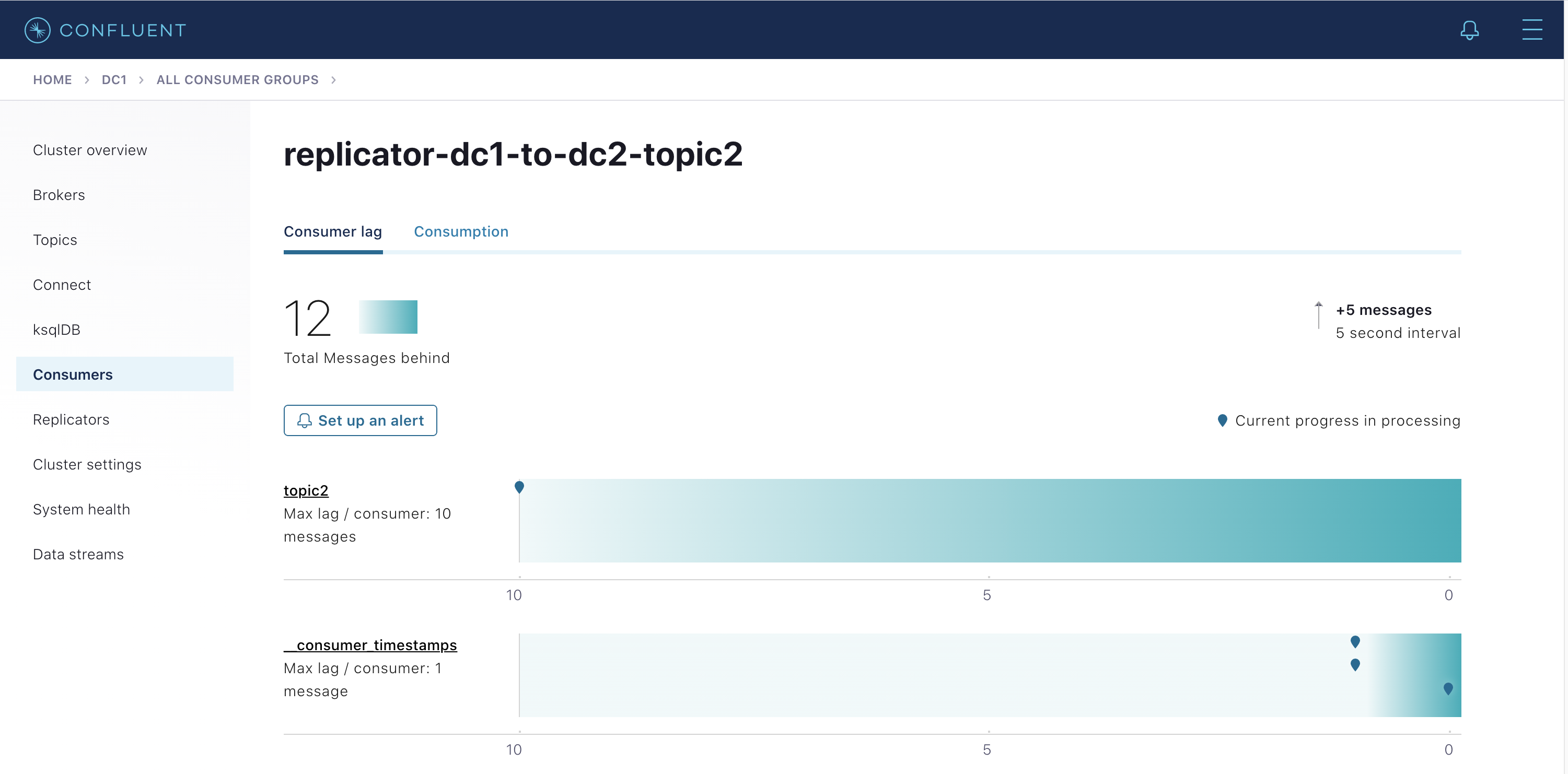

Start MirrorMaker with two streams, copying only `mirrored_topic`: `bin/kafka-run-class.sh kafka.tools.MirrorMaker --consumer.config config/<consumer config file> --num.streams 2 --producer.config config/<producer config file> --whitelist="mirrored_topic"`. To check on it, list the consumer groups at the source Kafka cluster with `bin/kafka-run-class.sh kafka.admin.ConsumerGroupCommand --new-consumer --bootstrap-server sourceKafka01:9092,sourceKafka02:9092 --list`, then describe the relevant group to see its offset lag.
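The MirrorMaker and monitoring commands from this step could be wrapped in a small script like the sketch below. It uses the `--describe --group` form of `ConsumerGroupCommand`, which reports per-partition offset lag (`--list` only prints group names). `KAFKA_HOME`, both config file names, and the group name `mirrormaker-group` are hypothetical; the group must match the `group.id` in MirrorMaker's consumer config. The `--new.consumer` flag is included on the assumption that the consumer config uses `bootstrap.servers` (the new consumer), which the 0.10.x MirrorMaker expects you to opt into explicitly.

```bash
#!/usr/bin/env bash
# Sketch: start MirrorMaker and check its consumer lag on the source cluster.
# Hypothetical values: KAFKA_HOME default, config file names, consumer group name.
# Set DRY_RUN=1 to print the commands instead of running them.
set -euo pipefail

KAFKA_HOME=${KAFKA_HOME:-/work/kafka_2.11-0.10.0.1}
SOURCE_BROKERS=sourceKafka01:9092,sourceKafka02:9092
CONSUMER_CONFIG=config/sourceClusterConsumer.properties   # hypothetical name
PRODUCER_CONFIG=config/targetClusterProducer.properties   # hypothetical name
GROUP=${GROUP:-mirrormaker-group}   # hypothetical; must match group.id in the consumer config

# Run a command, or just print it when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

start_mirror_maker() {
  # --new.consumer: use the bootstrap.servers-based consumer (assumed here).
  run "$KAFKA_HOME/bin/kafka-run-class.sh" kafka.tools.MirrorMaker \
    --new.consumer \
    --consumer.config "$CONSUMER_CONFIG" \
    --producer.config "$PRODUCER_CONFIG" \
    --num.streams 2 \
    --whitelist "mirrored_topic"
}

describe_lag() {
  # Prints current offset, log-end offset and lag per partition for the group.
  run "$KAFKA_HOME/bin/kafka-run-class.sh" kafka.admin.ConsumerGroupCommand \
    --new-consumer \
    --bootstrap-server "$SOURCE_BROKERS" \
    --describe --group "$GROUP"
}

case "${1:-}" in
  mirror) start_mirror_maker ;;
  lag)    describe_lag ;;
esac
```

Saved as, say, `mirror.sh`, `DRY_RUN=1 ./mirror.sh mirror` prints the MirrorMaker command line without running it, and `./mirror.sh lag` runs the lag check.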

# USING KAFKA TOOL CREATE DC1 TO DC2 PASSWORD #

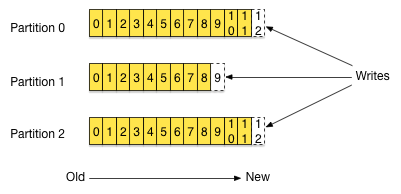

Configure the target broker in `config/server.properties`. The relevant settings are:

- `broker.id`: this must be set to a unique integer for each broker.
- `listeners=PLAINTEXT://localhost:9092,SSL://localhost:9093`
- `num.network.threads`: the number of threads handling network requests.
- `socket.send.buffer.bytes` and `socket.receive.buffer.bytes`: the send buffer (SO_SNDBUF) and receive buffer (SO_RCVBUF) used by the socket server.
- `socket.request.max.bytes`: the maximum size of a request that the socket server will accept (protection against OOM).
- `log.dirs`: a comma-separated list of directories under which to store log files.
- `log.retention.hours`: the minimum age of a log file to be eligible for deletion.
- `log.segment.bytes`: the maximum size of a log segment file; when this size is reached, a new log segment is created.
- `log.retention.check.interval.ms`: the interval at which log segments are checked to see if they can be deleted according to the retention policies.
- `zookeeper.connection.timeout.ms`: the timeout in ms for connecting to ZooKeeper.

List the topics on the target cluster, then create the topic that will receive the mirrored data: `bin/kafka-topics.sh --zookeeper localhost:2181 --list` and `bin/kafka-topics.sh --zookeeper localhost:2181 --create --if-not-exists --replication-factor 1 --partitions 1 --config retention.ms=3600000 --topic mirrored_topic`.

Start listening to the topic in the target cluster using the console consumer: `bin/kafka-console-consumer.sh --new-consumer --bootstrap-server localhost:9092 --topic mirrored_topic`.

Configure Kafka MirrorMaker. Its consumer configuration points at the source cluster:

- `bootstrap.servers=sourceKafka01:9093,sourceKafka02:9093`: use the plaintext port if the source Kafka cluster is not using SSL.
- `security.protocol=SSL`: ignore if the source Kafka cluster is not using SSL.
- `ssl.truststore.location=/work/kafka_2.11-0.10.0.1/ssl/sourceKafkaClusterTrustStore.jks`: ignore if the source Kafka cluster is not using SSL.
- `ssl.truststore.password=changeit`: ignore if the source Kafka cluster is not using SSL.
- `ssl.keystore.location=/work/kafka_2.11-0.10.0.1/ssl/sourceKafkaClusterKeyStore.jks`: the location of the key store file. This is optional for clients and can be used for two-way authentication.
- `ssl.keystore.password=changeit`: the store password for the key store file. This is optional for clients and only needed if `ssl.keystore.location` is configured.
- `ssl.key.password=changeit`: the password of the private key in the key store file. This is optional for clients.
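The MirrorMaker settings in this step end up in Java properties files, where `#` starts a comment only as the first non-blank character of a line; a trailing `# ignore if ...` after a value would become part of that value, so comments belong on their own lines. A minimal sketch that writes the consumer file from the settings listed here, plus a producer file for the target cluster, is shown below. The file names, the `group.id` value, and the producer's `bootstrap.servers=localhost:9092` (the target's plaintext listener from `listeners`) are assumptions.

```bash
#!/usr/bin/env bash
# Sketch: write MirrorMaker's consumer (source) and producer (target) configs.
# Assumptions: file names, group.id value, target bootstrap address.
set -euo pipefail

CONFIG_DIR=${CONFIG_DIR:-config}
SSL_DIR=/work/kafka_2.11-0.10.0.1/ssl
mkdir -p "$CONFIG_DIR"

# Consumer side: reads from the source cluster over SSL (port 9093).
# For a plaintext source, use the plaintext port and drop the
# security.protocol and ssl.* lines.
cat > "$CONFIG_DIR/sourceClusterConsumer.properties" <<EOF
bootstrap.servers=sourceKafka01:9093,sourceKafka02:9093
# hypothetical group name; used when checking lag with ConsumerGroupCommand
group.id=mirrormaker-group
security.protocol=SSL
ssl.truststore.location=${SSL_DIR}/sourceKafkaClusterTrustStore.jks
ssl.truststore.password=changeit
ssl.keystore.location=${SSL_DIR}/sourceKafkaClusterKeyStore.jks
ssl.keystore.password=changeit
ssl.key.password=changeit
EOF

# Producer side: writes to the target cluster's plaintext listener.
cat > "$CONFIG_DIR/targetClusterProducer.properties" <<EOF
bootstrap.servers=localhost:9092
EOF
```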

Select the appropriate version and download its `.tgz` from the Kafka downloads page.
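A minimal download-and-unpack sketch follows. It assumes the Scala 2.11 build of Kafka 0.10.0.1 (matching the `/work/kafka_2.11-0.10.0.1` paths used in this write-up) and the Apache archive URL layout; both are assumptions, so check the downloads page for the release you actually want.

```bash
#!/usr/bin/env bash
# Sketch: download and unpack a Kafka release for the target cluster.
# Assumed: version numbers, Apache archive URL layout, /work as install root.
set -euo pipefail

SCALA_VERSION=${SCALA_VERSION:-2.11}
KAFKA_VERSION=${KAFKA_VERSION:-0.10.0.1}

# Build the tarball URL for the chosen Scala/Kafka versions.
kafka_tgz_url() {
  echo "https://archive.apache.org/dist/kafka/${KAFKA_VERSION}/kafka_${SCALA_VERSION}-${KAFKA_VERSION}.tgz"
}

install_kafka() {
  local dest=${1:-/work}
  mkdir -p "$dest"
  # Download, then extract into $dest/kafka_<scala>-<kafka>/
  curl -fL "$(kafka_tgz_url)" -o "$dest/kafka.tgz"
  tar -xzf "$dest/kafka.tgz" -C "$dest"
  rm "$dest/kafka.tgz"
}

# Only download when called as: ./install-kafka.sh install [DEST]
if [ "${1:-}" = "install" ]; then
  install_kafka "${2:-/work}"
fi
```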

# USING KAFKA TOOL CREATE DC1 TO DC2 INSTALL #

Download and install Kafka (target cluster). Check `MirrorMaker.scala` for more details.
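Once installed, the target cluster has to be running before topics can be created on it or MirrorMaker can write to it. A sketch for a single-node target, using the default ZooKeeper and broker configs shipped in the release's `config/` directory, is shown below; the `KAFKA_HOME` path and the five-second pause are assumptions.

```bash
#!/usr/bin/env bash
# Sketch: start a single-node target cluster (ZooKeeper + one broker).
# Assumed: KAFKA_HOME location, default config files, 5 s startup pause.
# Set DRY_RUN=1 to print the commands instead of running them.
set -euo pipefail

KAFKA_HOME=${KAFKA_HOME:-/work/kafka_2.11-0.10.0.1}

# Run a command, or just print it when DRY_RUN is set.
run() {
  if [ -n "${DRY_RUN:-}" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Give ZooKeeper a moment to come up (skipped in dry-run mode).
pause() {
  [ -n "${DRY_RUN:-}" ] || sleep 5
}

start_target_cluster() {
  # -daemon runs each process in the background.
  run "$KAFKA_HOME/bin/zookeeper-server-start.sh" -daemon "$KAFKA_HOME/config/zookeeper.properties"
  pause
  run "$KAFKA_HOME/bin/kafka-server-start.sh" -daemon "$KAFKA_HOME/config/server.properties"
  # Listing topics only talks to ZooKeeper, so this confirms ZooKeeper is reachable.
  run "$KAFKA_HOME/bin/kafka-topics.sh" --zookeeper localhost:2181 --list
}

if [ "${1:-}" = "start" ]; then
  start_target_cluster
fi
```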